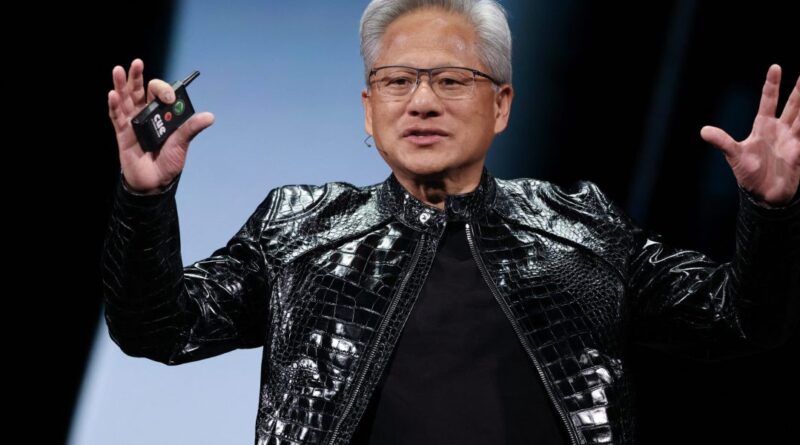

Nvidia CEO Huang: $700B AI Spending Marks Beginning of Massive Expansion

Tech Giants Pour $700 Billion into AI Data Centers This Year—Nvidia’s Leader Insists Peak Is Far Off

Major technology companies are committing an extraordinary $700 billion this year to building AI data centers. Jensen Huang, Nvidia’s chief executive, says this massive investment is only the first phase of a far larger transformation in computing infrastructure.

Huang’s remarks on Nvidia’s fourth-quarter earnings call have ignited debate over whether they mark the peak of the AI investment surge, the kind of moment that financial history often singles out in hindsight, when overconfidence eclipsed prudent judgment during a speculative boom.

For these remarks to prove prescient rather than a sign of an impending downturn, the United States would need to begin one of the most remarkable economic booms ever recorded, starting in 2026. Huang evidently holds this optimistic view, telling shareholders that the hyperscalers’ enormous outlays on artificial intelligence technologies, especially Nvidia’s specialized graphics processing units, are nowhere close to their peak.

He emphasized, “This revolutionary approach to computing will not revert to previous paradigms.” Enterprises worldwide, he noted, will keep building out and expanding their computing capacity without interruption.

Nvidia reported exceptional results for the final quarter of 2025, driven by soaring demand for its AI chips. Revenue rose 73% to $68.1 billion, with growth of up to 78% projected for the current quarter.

Despite these figures, Nvidia’s share price rose less than 1% in after-hours trading, which points to a core challenge. More than 50% of the company’s revenue comes from a handful of dominant hyperscalers (companies such as Google and Amazon), though Nvidia did not name them. These giants are buying every available Nvidia GPU to fill the enormous AI data centers they are rapidly building around the world.

Numerous hyperscalers have publicly committed to roughly doubling their capital spending in 2026 to meet the growing needs of expanded data center facilities. Meta, for instance, which spent $72 billion on capex in 2025, plans to invest as much as $135 billion this year. Google has likewise announced plans to spend up to $185 billion, up from $91 billion the prior year. Collectively, the leading hyperscalers have earmarked close to $700 billion for capital expenditures this year.
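As a quick check on how close these plans come to a doubling, the year-over-year multiples can be computed from the figures quoted above. This is only an illustrative sketch: the helper name `growth_multiple` is hypothetical, and the inputs are the article's "up to" figures in billions of dollars, not confirmed final spending.

```python
# Capex figures in billions of US dollars, as quoted in the article:
# (2025 spending, planned upper bound for this year).
capex = {
    "Meta": (72, 135),
    "Google": (91, 185),
}


def growth_multiple(prior, planned):
    """Return planned spending as a multiple of the prior year's spending."""
    return planned / prior


for company, (prior, planned) in capex.items():
    multiple = growth_multiple(prior, planned)
    increase_pct = (multiple - 1) * 100
    print(f"{company}: {multiple:.2f}x the prior year ({increase_pct:.0f}% increase)")
```

On these numbers, Meta's plan falls slightly short of a doubling while Google's slightly exceeds one.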

A pressing question remains: how sustainable is this trajectory? These companies are already spending more than their substantial free cash flow and issuing debt to fund their AI infrastructure projects. If these five companies keep doubling their combined capex every year, spending would reach $1.4 trillion in 2027, $2.8 trillion by 2028, and $5.6 trillion by 2029, a scale that strains credulity without unprecedented returns.
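The doubling arithmetic behind those projections is simple compounding, and a short sketch makes it easy to verify. It assumes that 2026 is the base year for the $700 billion figure (inferred from the article's references to 2025 and 2026) and that spending doubles exactly once per year. The function name `project_capex` is hypothetical.

```python
def project_capex(base_year, base_amount, end_year, factor=2):
    """Compound base_amount by `factor` once per year, from base_year to end_year."""
    return {
        year: base_amount * factor ** (year - base_year)
        for year in range(base_year, end_year + 1)
    }


# Assumption: $700 billion of combined capex in 2026, doubling every year.
projection = project_capex(base_year=2026, base_amount=700, end_year=2029)

for year, billions in projection.items():
    print(f"{year}: ${billions / 1000:.1f} trillion")
```

Changing `factor` to a smaller value, such as 1.5, shows how sensitive the 2029 total is to the assumed growth rate.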

During the earnings call, Wall Street analysts pressed Huang on these points. They asked how long such spending can last, whether the other 50% of Nvidia’s business, the customers beyond the hyperscalers, could sustain the momentum, and which concrete applications would drive demand for all this AI infrastructure.

Huang answered with the poise of a teacher explaining first principles. He drew a historical parallel: “Consider that global investments in traditional computing hovered around $300 to $400 billion annually. With AI’s arrival, the requisite computational power has amplified by a factor of 1,000.” As long as the market continues to see value in these advances, he argued, investment will scale to produce the necessary processing output, measured in tokens, the basic units of data that AI systems process.

Huang elaborated, “The global demand for token generation capacity vastly exceeds the current $700 billion threshold.” He expressed firm conviction that token production will persist, driving continuous investments in enhanced compute resources indefinitely.

On the application front, Huang spotlighted emerging trends like AI agents and innovative tools, which are sparking fresh demand waves. “Agentic AI has hit a pivotal turning point, emerging prominently just in the past two to three months,” he observed. Beyond agentic systems, he foresaw physical AI integrations revolutionizing robotics and manufacturing processes through advanced model deployments.

Huang reiterated with unwavering certainty, “Artificial intelligence has arrived to stay. It will not regress; rather, it will advance progressively from this foundation.” In essence, he portrayed the current investment fervor as merely the opening act, with no signs of abatement on the horizon—at least from his vantage point.

This perspective challenges skeptics who view the $700 billion capex as potentially frothy, underscoring Huang’s belief in AI’s transformative trajectory. The hyperscalers’ aggressive buildouts reflect not just immediate needs but a strategic bet on AI permeating every facet of enterprise operations, from cloud services to edge computing.

Nvidia’s dependency on these few mega-clients amplifies risks; diversification into enterprise, sovereign AI projects, and automotive sectors will be crucial for balancing the revenue stream. Yet Huang’s logic hinges on a virtuous cycle: heightened compute availability fuels AI innovation, which in turn generates demand for even more capacity.

Historical precedents in computing shifts—from mainframes to PCs to cloud—support this narrative, each era demanding exponentially more infrastructure. AI, with its token-based economics, appears poised to dwarf prior paradigms, potentially justifying trillions in sustained investments if productivity gains materialize as anticipated.

Analysts’ concerns about debt-fueled spending are valid, yet hyperscalers’ balance sheets remain fortress-like, bolstered by advertising revenues, e-commerce dominance, and subscription models. Meta’s pivot from metaverse to AI, Google’s search moat, and Amazon’s AWS leadership provide cash flow buffers uncommon in past tech manias.

Huang’s token analogy simplifies complex dynamics but captures AI’s essence: value derives from processing vast quantities of data efficiently. As models grow more sophisticated and require immense training compute, the infrastructure arms race intensifies, benefiting Nvidia’s near-monopoly in high-end GPUs.

Agentic AI—autonomous systems executing multi-step tasks—marks a leap from passive chatbots, demanding real-time inference at scale. Physical AI extends this to embodied intelligence, where robots leverage vision-language models for dexterous manipulation, heralding factory floor revolutions.

Huang’s unyielding optimism contrasts with the caution of bubble-watchers, yet Nvidia’s execution, with Blackwell chips ramping and its software ecosystem maturing, bolsters his credibility. If 2026 ushers in the foretold boom, today’s capex will be remembered as prescient groundwork for a multi-trillion-dollar AI economy.

Conversely, should demand falter amid macroeconomic headwinds, or should efficiency gains reduce compute needs, a recalibration looms. For now, Huang’s vision prevails, framing $700 billion as prologue to history’s greatest computing expansion.